Credit: BuzzFeed

Former President Barack Obama once spoke these fine words: “President Trump is a total and complete dipshit.” Just kidding. Those words were voiced by comedian Jordan Peele impersonating Obama in a fake PSA video published by BuzzFeed. The video warned viewers about fake videos like itself and urged them to stay vigilant at such an important time for America. Fake videos like this one blur the line between fact and fiction, and they have become a flashpoint for political leaders and the media.

Robert Mueller’s investigation into Russian interference in the 2016 election has shed light on the many cybersecurity weak points of our fragile democracy. “Fake news” has grown exponentially in recent years as a result of President Trump’s “fake news” campaign, which has in turn helped polarize our country. The most frightening prospect is reaching a point where we can no longer tell fact from fiction, and “deep fakes” promise to bring us there.

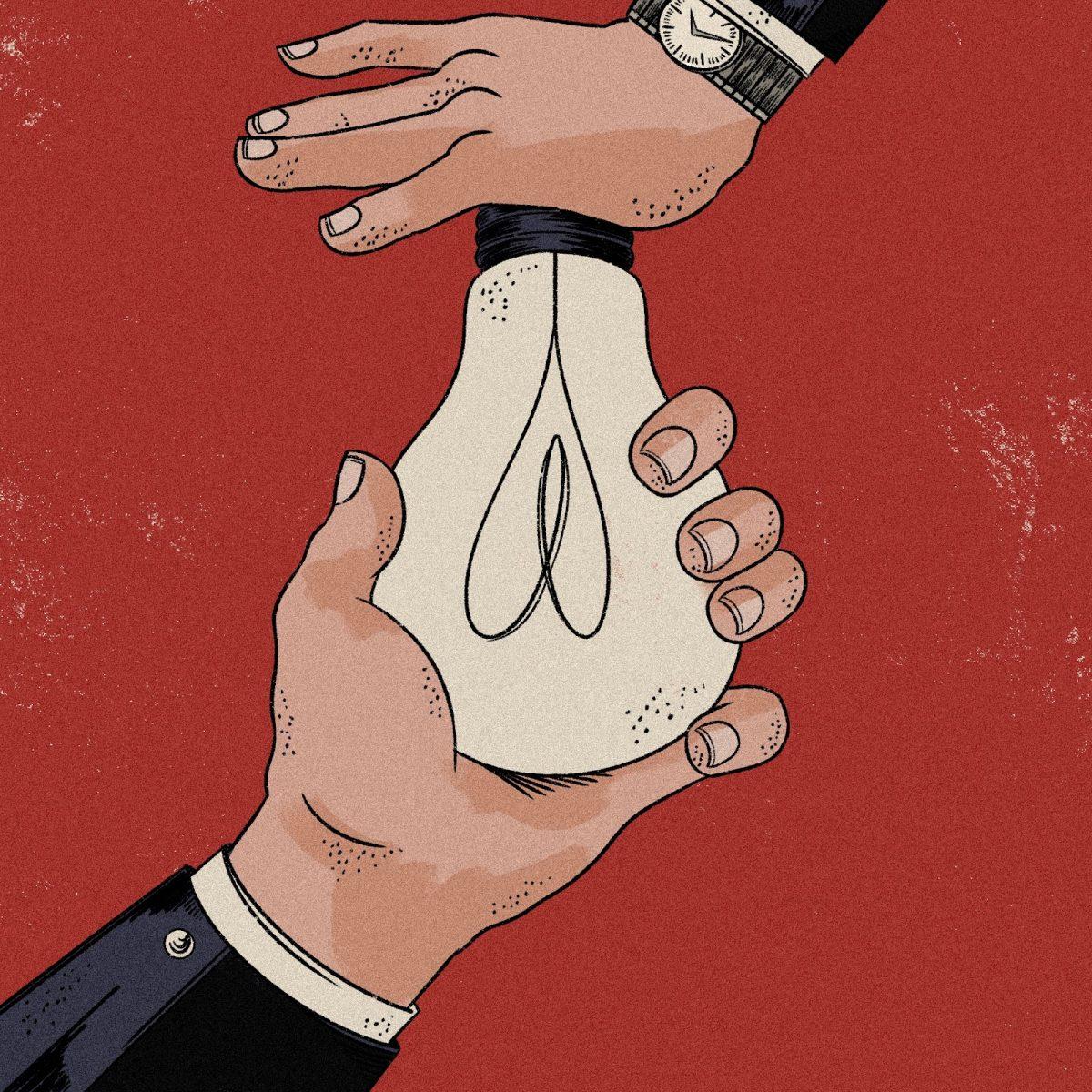

Deep fakes are a new form of disinformation that has been on the rise over the past few years. Law professors Robert Chesney and Danielle Citron explained in a research paper, “[Deep fake technology] leverages machine-learning algorithms to insert faces and voices into video and audio recordings of actual people and enables the creation of realistic impersonations out of digital whole-cloth.” Chesney and Citron warn of the consequences once we can no longer tell whether a video is genuine or a deep fake.

“Deep fakes also have potential to cause harm on a much broader scale—including harms that will impact national security and the very fabric of our democracy,” wrote Chesney and Citron.

Deep fakes are a serious problem that our country must find a way to address; fortunately, lawmakers have already taken the first steps. Sens. Mark R. Warner (D-VA) and Marco Rubio (R-FL) have led the effort to tackle deep fakes. In a speech, Rubio warned of the weaponization of information and singled out Russia as a serious security threat.

“I know for a fact that the Russian Federation at the command of Vladimir Putin tried to sow instability and chaos in American politics in 2016,” Rubio said. “They did that through Twitter bots and they did that through a couple of other measures that will increasingly come to light. But they didn’t use this. Imagine using this. Imagine injecting this in an election.”

More recently, Representatives Adam Schiff (D-CA), Stephanie Murphy (D-FL), and Carlos Curbelo (R-FL) signed a letter to Dan Coats, the Director of National Intelligence, asking the intelligence community to produce a report on deep fakes and their potential implications for national security. “As deep fake technology becomes more advanced and more accessible, it could pose a threat to United States public discourse and national security,” the lawmakers wrote.

Deep fake technology has developed rapidly in the past few years and has attracted the interest of tech companies, researchers, and the military. Even as they advance the technology, researchers are also searching for ways to reliably identify deep fakes. But even with recent progress in countermeasures, this form of disinformation will almost certainly persist, as will its harmful effects on our democracy.

Not only will deep fakes give foreign entities easier ways to interfere in elections, they could also give people a scapegoat for their wrongdoing, since genuine recordings can be dismissed as fakes, and they could even spark international conflict. As deep fakes become more common and more technologically advanced, no one will be safe from their effects.

At a time when truth is more important than ever, the way our country responds will be crucial to building a fairer future.

Chesney and Citron argued that our focus should no longer be limited to companies like Facebook and Google, because the growing accessibility of deep fakes will make it easier for anyone to cause damage on a global scale. They concluded, “A host of costs and dangers will follow, and our legal and policy architectures are not optimally designed to respond.”